Previously: In Why Responsible AI is the Bedrock of AI-Powered Applications, I talked about why Responsible AI is so important. I gave examples of times when AI did not work as it should. These examples showed that AI can fail when it is not designed and tested correctly. In this post I want to talk about how to make sure your AI works the way you want it to.

Important Note: This blog is intended for informational and educational purposes only. It does not constitute legal advice and should not be relied upon as such. For any legal concerns related to AI compliance, data privacy, or regulatory obligations, please consult a qualified legal professional.

Understanding that Responsible AI is important is one thing. As I have learned over the last 2+ years, doing it right is another matter. Honestly, I had my doubts about how much overhead it would add when we built out our second PoC. But including Responsible AI from the start made it much easier to design a safe AI feature than bolting on "add-ons" later on an as-needed basis.

Several groups describe the tenets of Responsible AI. Since I started with AWS' definitions [1], I am going to use them throughout this blog. AWS defines Responsible AI as eight interconnected parts, which act like a checklist for validating the behavior of your AI feature. Your job as a product leader is to make deliberate choices about each one, rather than letting them happen by default. In the following sections, I am going to cover each of the tenets, and describe how one or more of them might have been used to address the issues raised in each story described in the previous post [3].

What is Responsible AI?

Responsible AI nudges you to ask a deceptively simple question: who will use the AI feature? It is a way to think about AI so that your clients feel safe using it; it is not a single feature or idea. The focus of Responsible AI is to ensure your AI feature is fair, transparent, explainable, and safe.

If the AI feature:

- works well for some people but not others, then you may have a bias issue

- works well when you test it in a controlled environment, but does not behave the way you want when a human is interacting with it, then you may have security, veracity and safety issues

- works well with static prompts during development, but generates results that you cannot understand or that are false, then you might have robustness and explainability issues

Responsible AI is about building AI-powered features people can trust, not about slowing down innovation.

The 8 Tenets of Responsible AI

Let's review the eight tenets of Responsible AI defined by AWS. Think of them as a checklist you run against every AI feature you either build or buy throughout its lifetime.

Fairness means your AI feature treats everyone equally, regardless of race, gender, age, socioeconomic status, etc. From the point of view of a Product Owner, you need to ensure that your design and your prompt/response testing are representative of all the people using the AI-powered feature.
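One way to make "representative testing" measurable is to compare how often responses pass review for each user group. The sketch below is only an illustration: the group labels, the sample results, and the `MAX_GAP` threshold are all assumptions, and a real evaluation would need far more test cases and a carefully chosen fairness metric.

```python
from collections import defaultdict

def pass_rate_by_group(results):
    """Return the share of passing test cases for each user group.

    results: iterable of (group, passed) pairs from prompt/response testing.
    """
    totals = defaultdict(int)
    passes = defaultdict(int)
    for group, passed in results:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {group: passes[group] / totals[group] for group in totals}

def fairness_gap(rates):
    """Largest difference in pass rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Hypothetical review results: (user group, did the response meet the criteria?)
sample_results = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

MAX_GAP = 0.2  # assumed threshold; pick one that fits your use case

rates = pass_rate_by_group(sample_results)
gap = fairness_gap(rates)
needs_review = gap > MAX_GAP  # flag the feature for a bias review
```

A large gap does not prove bias on its own, but it tells the team where to look first.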

Explainability means that you can answer "why did the AI do that?" so that anyone can understand how a result was generated. There are two groups that need this information:

- your internal teams for data gathering and debugging

- your end users who want to know why a certain result was generated

Privacy and security means ensuring that personal data is:

- collected with informed consent

- used only for its stated purpose

- protected in transit and at rest

Here are a couple of things to consider when choosing a model:

- will the model provider use your data for training purposes; that is, to train future versions of the model?

- will your prompts be routed to regions outside the country where your data is required to reside?

Safety means your AI feature can process harmful input without producing harmful outputs such as profanity, violence, misinformation, illegal advice, self-harm instructions, or content that endangers users.

Safety requires some form of guardrails like:

- input and output filters

- disallowed topics

- forbidden words or phrases
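As a rough illustration of how these three guardrails fit together, here is a minimal sketch that screens both the user's input and the model's output. Everything in it is hypothetical: the phrase and topic lists, the `REFUSAL` text, and the `generate` callable standing in for your model call. Simple keyword matching like this is easy to evade, so treat it as a picture of the control flow rather than a production filter.

```python
import re
from dataclasses import dataclass

# Placeholder lists; a real deployment would maintain these with care.
FORBIDDEN_PHRASES = ["darn", "stupid"]
DISALLOWED_TOPICS = {"legal advice": ["lawsuit", "legal advice"]}
REFUSAL = "Sorry, I can't help with that request."

@dataclass
class GuardrailResult:
    allowed: bool
    reason: str = ""

def check_text(text: str) -> GuardrailResult:
    """Apply the forbidden-phrase and disallowed-topic checks to one text."""
    lowered = text.lower()
    for phrase in FORBIDDEN_PHRASES:
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            return GuardrailResult(False, f"forbidden phrase: {phrase}")
    for topic, keywords in DISALLOWED_TOPICS.items():
        if any(re.search(rf"\b{re.escape(k)}\b", lowered) for k in keywords):
            return GuardrailResult(False, f"disallowed topic: {topic}")
    return GuardrailResult(True)

def guarded_reply(user_input: str, generate) -> str:
    """Filter the input, call the model, then filter the output."""
    if not check_text(user_input).allowed:
        return REFUSAL  # input filter: the model is never called
    reply = generate(user_input)
    if not check_text(reply).allowed:
        return REFUSAL  # output filter: the unsafe reply is never shown
    return reply
```

Checking both directions matters: the input filter stops obvious misuse early, while the output filter catches harmful content that a harmless-looking prompt can still produce.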

The Safety tenet requires continuous monitoring throughout the lifecycle of the AI feature, even if the feature is safe at launch. Over time, model updates, new user behavior patterns, or deliberate adversarial prompts may affect the behavior of the AI feature.

Controllability means you have the ability to:

- observe how the AI feature behaves

- intervene when it behaves unexpectedly

- adjust the behavior

- shut it down if necessary

From a Product Owner's point of view, the AI's behavior must remain consistent over time, and remain adaptable so that it can continue to represent the brand favorably.
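The four abilities above can be pictured as a thin wrapper around the model call. The sketch below is an assumption-heavy illustration: `ControlledFeature`, `FeatureControls`, the `temperature` setting, and the `generate(prompt, temperature)` callable are all hypothetical names, not part of any specific product or SDK.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("ai_feature")

@dataclass
class FeatureControls:
    enabled: bool = True       # kill switch
    temperature: float = 0.2   # example of an adjustable behavior setting
    fallback_message: str = "This assistant is temporarily unavailable."

class ControlledFeature:
    def __init__(self, generate, controls: FeatureControls):
        self.generate = generate  # hypothetical callable: (prompt, temperature) -> str
        self.controls = controls
        self.history = []         # observe: keep a record of every exchange

    def ask(self, prompt: str) -> str:
        if not self.controls.enabled:
            logger.warning("Feature disabled; returning fallback")
            return self.controls.fallback_message
        reply = self.generate(prompt, self.controls.temperature)
        self.history.append((prompt, reply))
        logger.info("prompt=%r reply=%r", prompt, reply)
        return reply

    def adjust(self, temperature: float) -> None:
        """Adjust behavior without redeploying the feature."""
        self.controls.temperature = temperature

    def shut_down(self) -> None:
        """Intervene: stop calling the model until re-enabled."""
        self.controls.enabled = False
```

In a real system the controls would typically live in a configuration service or feature-flag tool so that they can be changed without a code release.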

Veracity means the AI produces accurate, truthful outputs, whereas Robustness means the AI maintains that accuracy even when inputs are unusual or deliberately malicious.

- An output that is factually wrong is an example of a common veracity issue called Hallucination. It most likely results when an LLM relies on its training data to "fill in the holes" when generating a response.

- An example of a Robustness issue is Prompt injection. This occurs when malicious input manipulates the model into ignoring its instructions. As a result, the AI may not behave as intended; for example, it may generate unprofessional responses that upset the user.

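Both of these issues can be mitigated before deployment. Hallucination is typically addressed through retrieval-augmented generation (RAG), output validation, and fact-checking pipelines, while prompt injection is typically addressed through input and output validation and adversarial testing.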
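To give a feel for what output validation can look like, here is a deliberately narrow sketch: it checks that every number in a generated answer also appears in the source passages the answer is supposed to be based on. The function names and sample passages are hypothetical, and this heuristic only catches one kind of unsupported claim; it is not a full fact-checking pipeline.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")

def unsupported_numbers(answer: str, passages: list[str]) -> list[str]:
    """Return the numbers in the answer that appear in none of the passages."""
    source_numbers = set()
    for passage in passages:
        source_numbers.update(NUMBER.findall(passage))
    return [n for n in NUMBER.findall(answer) if n not in source_numbers]

def is_grounded(answer: str, passages: list[str]) -> bool:
    """True when every number in the answer is backed by a source passage."""
    return not unsupported_numbers(answer, passages)

# Hypothetical retrieved passages and two candidate answers
passages = ["The warranty lasts 24 months.", "Tighten the bolt to 90 Nm."]
good_answer = "The warranty period is 24 months."
bad_answer = "The warranty period is 36 months."
```

An answer that fails the check could be blocked, regenerated, or routed to a human for review, depending on how much risk the feature can tolerate.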

Governance means incorporating Responsible AI best practices into the design, development, and operation of your AI-powered features. As a Product Owner, this translates into establishing clear standards and processes for how your team builds and maintains AI features that use models.

In practice, Governance looks like:

- defining who owns the Responsible AI requirements for each AI feature

- including Responsible AI acceptance criteria in your definition of done

- conducting regular reviews of AI feature behavior as models and usage patterns evolve

- maintaining documentation of the decisions made

- ensuring your team understands the terms of the LLM provider you are using

Governance is how Responsible AI moves from intention to practice.

Transparency means everyone using the AI feature knows that they are interacting with an AI. The users will need to understand what the AI feature can and cannot do, and have enough information to make informed decisions about whether and how to engage with it.

Including a statement like one of the following in the user interface helps:

- MyProduct-AI can make mistakes. Check important info.

- You are using AI technology.

Transparency is the foundation of informed consent, which in turn, is the foundation of trust.

Example: Why Responsible AI Is Needed

Consider an AI-powered customer support assistant that answers product questions for automobile parts. The users are either auto mechanics or automobile owners. If the assistant generates incorrect or misleading guidance, the user could make decisions that result in the wrong part being ordered or the wrong diagnosis of an engine noise. Without guardrails, monitoring, and validation, the AI may provide confident but incorrect responses, exposing both the user and the organization to risk and damaging the brand.

This is where applying the tenets of Responsible AI becomes critical. If the wrong part is ordered based on the assistant's guidance, then fixing the car is delayed. Similarly, if the wrong diagnosis is made, then the customer may spend more money on a problem that never gets fixed.

Monitoring user feedback for response accuracy, and then following the chain of thought via an observability tool, will help determine a path forward to re-establishing the correct behavior for the AI. That is, it helps determine whether there is a design issue, a coding problem, an LLM or Knowledge Base (KB) configuration issue, etc. Once the cause is identified, the product team can update the AI.

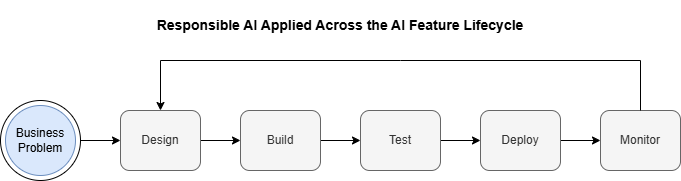

Figure 1: All of the tenets of Responsible AI are constantly applied for the entire lifecycle of the feature. Monitoring AI behavior and user feedback is key to ensuring the system continues to meet user needs.

A more detailed use case will be covered in the next post.

A Framework, Not a Finish Line

When I read the tenets of the Responsible AI framework a while ago, the proverbial lightbulb turned on. First, I found the Responsible AI framework to be practical; it just made sense. It identified issues that would keep a Product Owner up at night, like hidden biases and malicious prompting. Second, applying the tenets on Day 0 steered the design toward mandating features like guardrails and toward selecting and configuring the right LLM. Third, the tenets become product requirements that can be tested and audited via KPIs.

The eight tenets are not independent either. They reinforce each other. An AI feature with strong Governance produces better Transparency. An AI feature with rigorous Veracity supports Explainability. An AI feature built with Fairness in mind tends to be more Robust.

The organizations that get this right are ones that treat Responsible AI as a product discipline. They will assign owners, metrics, milestones, and accountability to the tenets of Responsible AI just like any other feature.

As I conclude, I want to share that I have designed, developed, and deployed AI features as both PoCs (Proofs of Concept) and MVPs (Minimum Viable Products). One of the most important lessons I learned is that the time to think about Responsible AI is before you write a single line of code. I believe that each of the failures described in the previous post could have been prevented by simply asking the right questions on Day 0.

Putting my Product Owner hat on for a moment, here is an action list that might help:

- identify the relevant tenets for the use case

- assign an owner to each

- convert them into acceptance criteria

- monitor them after launch
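If it helps to make the action list concrete, the four steps can be captured as a small record per tenet plus a pre-launch check. This is only a sketch under assumptions: `TenetRequirement`, `launch_blockers`, and the sample entries are hypothetical, and most teams would keep this information in their backlog tool rather than in code.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class TenetRequirement:
    tenet: str                     # step 1: a tenet relevant to the use case
    acceptance_criterion: str      # step 3: the testable requirement
    owner: Optional[str] = None    # step 2: who is accountable
    metric: Optional[str] = None   # step 4: what is monitored after launch

def launch_blockers(requirements):
    """List the gaps that should be closed before the feature ships."""
    problems = []
    for req in requirements:
        if not req.owner:
            problems.append(f"{req.tenet}: no owner assigned")
        if not req.metric:
            problems.append(f"{req.tenet}: no post-launch metric")
    return problems

# Hypothetical entries for a support assistant
requirements = [
    TenetRequirement("Safety", "Blocked topics are refused in testing",
                     owner="Product Owner", metric="guardrail trigger rate"),
    TenetRequirement("Veracity", "Answers match the knowledge base in review"),
]
blockers = launch_blockers(requirements)
```

Running the check as part of the definition of done keeps unowned or unmonitored tenets from slipping through unnoticed.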

Up Next: In the next post, we walk through a simulated scenario showing what happens when Responsible AI is not applied, and how to fix it in practice.